So, here's the final chapter of my little statistical study of the IMDb Top 250, which was supposed to bring in the critics' opinion on the best... um... I mean the most popular movies of all time. Initially I thought I could gather some interesting correlations and paradoxes to comment on. Unfortunately, I found mostly shit. Obviously one can't expect a chart built from critics' ratings to be more objective than the one made by the IMDb users, because the only real difference is that critics are paid to watch movies systematically, and afterwards they tend to express their feelings, limitations, misunderstandings, simple needs, and annoying personal biases in a more or less readable form.

The full IMDb Top 250, rearranged by RT rating, can be found here. The order is by the percentage of positive reviews (from all critics) and, where that is equal, by the number of reviews. The last column contains the users' rating (the percentage of RT users who voted 3.5/5 or more).
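For the curious, the ordering rule is easy to sketch in a few lines of Python. This is only my guess at how one would reproduce it, not how the chart was actually built. The Toy Story and Let the Right One In counts are the ones quoted in this post (assuming Toy Story has no negative reviews at all), while "Hypothetical Movie" is invented just to show the tie-break.

```python
# Rows are (title, positive reviews, negative reviews).
# "Hypothetical Movie" is made up; it exists only to demonstrate the tie-break.
movies = [
    ("Let the Right One In", 163, 4),
    ("Hypothetical Movie", 50, 0),
    ("Toy Story", 76, 0),
]

def rt_key(row):
    """Sort key: higher percentage first, then more reviews first."""
    _title, positive, negative = row
    total = positive + negative
    percent = round(100 * positive / total)  # whole-number percentage of positive reviews
    return (-percent, -total)

ranked = sorted(movies, key=rt_key)
for title, positive, negative in ranked:
    total = positive + negative
    print(f"{title}: {round(100 * positive / total)}% of {total} reviews")
```

Two movies at 100% are separated only by how many reviews they have, which is why a film with 76 reviews can sit on top of the whole chart.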

A few bothersome things come out immediately: apparently Toy Story is the most critically acclaimed piece of cinema among those featured in the IMDb Top 250, with 76 positive reviews. It's followed closely by The Wizard of Oz and The Godfather. The movies in red are the first 20 from the IMDb top list, and they seem to be more or less evenly distributed along the chart. The graph below indeed shows no correlation whatsoever:

Forrest Gump, 21st in the IMDb Top 250, has only a 71% rating from the critics (68% if only the top critics are counted). On the other hand, Let the Right One In, which will drop out of the chart after about five more shitty Marvel comic book adaptations, sits at 98% on RT with 163 positive and 4 negative reviews... Go figure...
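If you'd rather put a number on "no correlation" than squint at a scatter plot, a rank correlation between IMDb position and RT percentage is one option. Below is a minimal sketch of Spearman's coefficient in plain Python. I'm not claiming this is what produced the graph, and the six data points are hypothetical placeholders, not the real chart.

```python
from statistics import mean

def average_ranks(values):
    """Rank values from 1..n; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def spearman(xs, ys):
    """Spearman's rho: the Pearson correlation of the two rank lists."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

# Hypothetical sample: IMDb chart position vs. RT critics' percentage.
imdb_position = [1, 2, 3, 4, 5, 6]
rt_percent = [90, 100, 98, 71, 100, 95]
rho = spearman(imdb_position, rt_percent)
print(f"Spearman rho = {rho:.2f}")
```

A value near 0 would back up the "no correlation" reading. A value near -1 would mean that films higher on the IMDb chart (smaller position number) also tend to have the higher RT percentages.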

This is probably the right moment to mention that the chart of the best movies by RT critics' rating, which you can find here, is even more absurd: first place is occupied by Toy Story 2 with 161 positive reviews.

Conclusion #1: the critics are just as clueless as everybody else when it comes to judging a movie. Surprise, surprise...

That doesn't mean we should stop here. Maybe things get better if we consider only the top-rated critics? Wrong. More than half of the movies in the IMDb Top 250 change their ratings by less than 5%. The very top of the chart becomes absolutely ridiculous, as if it had been put together by a mentally challenged 8-year-old girl: Harry Potter 8, followed by The Artist, Pan's Labyrinth, Finding Nemo, Toy Story 3 and of course - I Want To Shoot Myself. Twice.

The interesting part is that if we listen to the top critics, 18 of the movies in the IMDb Top 250 would be considered rotten, falling under 60%. It's true that for most of them the reviews are scarce, but that's not the case for The Prestige and Shutter Island: apparently the audience's attitude towards those two movies is much more favorable than the critics'.

Conclusion #2: The special selection of top critics (which is based only on the authority of the media they work for) can't be used for any statistical purpose, because A) the top critics are too few and too unevenly distributed over time; and B) they often have no idea what they are talking about, exactly like their B-rated colleagues.

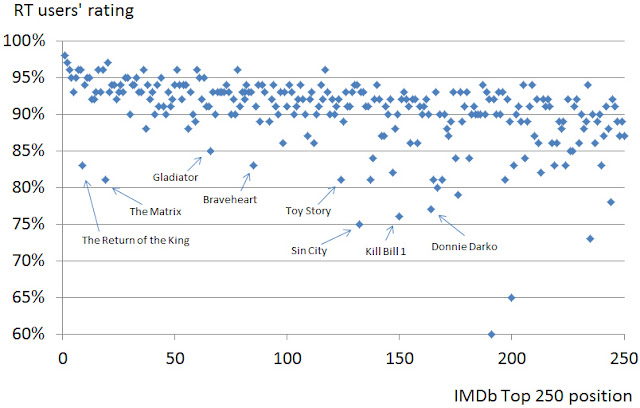

Now, let's consider the RT users. The graph below shows a much better correlation between the IMDb score and the RT one, which is expected, as this is essentially the same voting crowd:

However, notice the deviations from the norm, marked with arrows. How is it possible for the first two movies in the LOTR trilogy to be rated 95% or so, and then for The Return of the King to suddenly drop to 83%? Really, are Gladiator, The Matrix, Kill Bill vol. 1 and Donnie Darko worse than 95% of the movies in the IMDb Top 250 according to the RT voters? The reason for this paradox becomes clear when we order the movies by the number of RT voters:

Typically a very popular movie on IMDb gets about 500,000 to 700,000 votes. What we have on RT is clearly illogical: the 10 most popular movies have more than 30 million votes each, and then suddenly the 11th one has "only" 3 million. WTF? Moreover, all the movies with tens of millions of votes generally have a users' score much lower than expected. It seems that at some point a bunch of losers hacked the rating system, targeting what seem to be completely random movies, and screwed up their scores.
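That kind of cliff is easy to spot automatically: sort the vote counts and look for the biggest ratio between neighbours. Here's a rough sketch. The numbers are invented to mimic the shape described above (ten movies above 30 million, then a drop to 3 million); they are not the actual RT figures, and the function assumes every count is positive.

```python
def largest_drop(vote_counts):
    """Return (movies_above_gap, ratio) for the biggest relative drop
    between neighbours once the counts are sorted in descending order.
    Assumes all counts are positive."""
    ordered = sorted(vote_counts, reverse=True)
    best_index, best_ratio = 0, 1.0
    for i in range(len(ordered) - 1):
        ratio = ordered[i] / ordered[i + 1]
        if ratio > best_ratio:
            best_index, best_ratio = i + 1, ratio
    return best_index, best_ratio

# Made-up vote counts shaped like the situation described in the post.
votes = [32_000_000] * 10 + [3_000_000, 2_500_000, 1_800_000]
movies_above_gap, ratio = largest_drop(votes)
print(f"{movies_above_gap} movies sit above a {ratio:.1f}x drop in votes")
```

In a healthy distribution the neighbour-to-neighbour ratio stays close to 1. A tenfold jump after exactly ten movies is the kind of thing that makes you suspect the counter rather than the audience.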

Conclusion #3: The users' votes on RT are manipulated and mean shit.

Which leads to the bottom line: Rotten Tomatoes is only an aggregator of movie reviews, and nothing else. Any attempt to pull meaningful statistical information out of it will fail miserably. RT should:

- close their critics' charts because they are just laughable;

- stop showing the statistics by top critics, because it is pointless;

- and reset all the users' votes, because they are obviously manipulated.

I knew there was something rotten in those tomatoes...
